Have you ever wondered what really happens when you try to sign up for a new app or service and see that annoying message: "This username is already taken"? It might seem like a small thing, but the technology behind those super-fast checks is actually very complex.

In this blog post, we’ll break down the clever systems and tools that big tech companies like Google, Meta, and Amazon use to handle billions of username checks quickly and reliably. We’ll look at how fast in-memory caching with Redis hashmaps works, how B+ trees help organize huge amounts of data, and how Bloom filters give lightning-fast "yes or no" answers.

But it doesn’t stop there. These companies don’t rely on just one tool. They layer and combine these technologies in smart ways to build strong, multi-level systems. By using load balancing, distributed databases, and a mix of fast caches and main data sources, they can handle massive traffic from around the world.

Next time you see the "username taken" message pop up in just a split second, you’ll know there’s a lot of engineering power working behind the scenes to make it happen so smoothly.

The Need for Speed: Why Simple Database Lookups Aren't Enough

When you sign up for a new app or service, checking if your chosen username is available might seem like a simple task. You might think it’s just one quick database query. But in reality, especially for big platforms with billions of users, it’s much more complicated.

Imagine you want to create an account on a popular social media app with the username "bytemonk." For a small app, a basic database check might work fine. But for a huge, global platform, relying on one database to handle millions (or even billions) of username lookups creates big problems. It causes slow response times, delays, and puts too much load on the system.

To solve this, tech giants use a smart, multi-layered system for checking usernames. They mix different advanced data structures and distributed systems to make it work. By combining these technologies, they can deliver super-fast checks, handle massive amounts of traffic, and keep everything running smoothly even as the number of users keeps growing around the world.

The Building Blocks: Key Data Structures for Efficient Username Lookups

At the core of these high-performance username systems are several powerful data structures, each with its own unique strengths and use cases. Let’s explore these key players and see how they work together to make the overall system fast and efficient:

Redis Hashmaps: Blazing-Fast In-Memory Lookups

One of the first tools used in a username check system is the Redis hashmap (Redis itself calls this data type a hash). It’s a fast in-memory data structure that lets you store many field-value pairs under one key, making it great for quickly checking recent usernames.

Here’s how it works: each field in the hashmap is a username, and its value could be something simple, like a user ID or a flag showing it’s taken. When someone checks if a username is available, the system looks in this hashmap first. If it finds the username, it means it’s already taken, and Redis can give an instant answer with no need to check the database.
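
As a rough illustration of that lookup, the sketch below uses a small in-memory stand-in that mirrors the shape of the Redis `HSET` and `HEXISTS` commands. The `FakeRedisHash` class, the `usernames` key, the lowercasing rule, and the sample user ID are all assumptions made for this example, not details from any real system.

```python
class FakeRedisHash:
    """In-memory stand-in for a Redis server (hypothetical, for illustration).

    It covers only the two hash commands this sketch needs.
    """

    def __init__(self):
        self._hashes = {}

    def hset(self, name, key, value):
        # Like Redis HSET: returns 1 if the field is new, 0 if it was updated.
        fields = self._hashes.setdefault(name, {})
        is_new = key not in fields
        fields[key] = value
        return int(is_new)

    def hexists(self, name, key):
        # Like Redis HEXISTS: True if the field exists in the hash.
        return key in self._hashes.get(name, {})


USERNAMES_KEY = "usernames"  # assumed key name for the hash


def claim(client, username, user_id):
    """Record a username as taken (field = username, value = user ID)."""
    client.hset(USERNAMES_KEY, username.lower(), user_id)


def is_taken(client, username):
    """Exact-match check against the hash; case-insensitive by assumption."""
    return client.hexists(USERNAMES_KEY, username.lower())


client = FakeRedisHash()
claim(client, "bytemonk", 1001)
print(is_taken(client, "bytemonk"))    # True
print(is_taken(client, "bytemonkey"))  # False
```

With a real client such as redis-py, the same two method names (`hset` and `hexists`) are available on the connection object, so the helper functions would not need to change. Note that a separate check followed by a write is not atomic; Redis also offers `HSETNX` for set-if-absent behavior.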

Redis hashmaps are amazing for exact matches and give very fast responses most of the time. But they do have a limit: you can’t keep billions of usernames in one Redis instance because memory is limited. That’s why other data structures are used for bigger and more complex checks.

Tries (Prefix Trees): Efficient Prefix-Based Lookups and Autocomplete

While Redis hashmaps are great for quick yes/no checks, what if you want to do more? For example, suggest similar usernames or find all names that start with a certain prefix. This is where tries, also called prefix trees, come in.

A trie is a tree-like data structure that organizes words by their common prefixes. Instead of storing each username as a full word, a trie breaks it down letter by letter and builds a path through the tree. This makes lookups fast, taking only as long as the length of the word, no matter how many usernames there are in total.

A big advantage of tries is that usernames with the same starting letters share the same path, which saves space. For example, "bytemonk" and "bytemonkey" share the entire "bytemonk" path, and "bytemonkey" simply continues two nodes further, so the shared letters are stored only once.

Tries are very helpful for prefix-based searches and autocomplete suggestions, which matter when a user’s first choice of username is already taken. By moving through the trie, the system can quickly suggest other options or show all usernames starting with a certain prefix.
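
To make the letter-by-letter idea concrete, here is a minimal trie sketch with an exact lookup and a prefix search. The class and method names (`UsernameTrie`, `with_prefix`) and the sample usernames are invented for this example.

```python
class TrieNode:
    def __init__(self):
        self.children = {}   # next letter -> child node
        self.is_end = False  # True if a username ends at this node


class UsernameTrie:
    def __init__(self):
        self.root = TrieNode()

    def insert(self, username):
        node = self.root
        for ch in username:
            node = node.children.setdefault(ch, TrieNode())
        node.is_end = True

    def _walk(self, prefix):
        """Follow the prefix letter by letter; None if the path breaks."""
        node = self.root
        for ch in prefix:
            node = node.children.get(ch)
            if node is None:
                return None
        return node

    def contains(self, username):
        node = self._walk(username)
        return node is not None and node.is_end

    def with_prefix(self, prefix, limit=10):
        """Up to `limit` stored usernames starting with prefix, alphabetically."""
        results = []
        start = self._walk(prefix)
        if start is None:
            return results
        stack = [(start, prefix)]
        while stack and len(results) < limit:
            node, word = stack.pop()
            if node.is_end:
                results.append(word)
            # Push children in reverse order so the smallest letter pops first.
            for ch in sorted(node.children, reverse=True):
                stack.append((node.children[ch], word + ch))
        return results


trie = UsernameTrie()
for name in ["bytemonk", "bytemonkey", "bytemage", "alice"]:
    trie.insert(name)

print(trie.contains("bytemonk"))  # True
print(trie.contains("bytemon"))   # False: a path exists, but no name ends here
print(trie.with_prefix("bytem"))  # ['bytemage', 'bytemonk', 'bytemonkey']
```

The cost of `contains` depends only on the length of the username, which matches the lookup behavior described above.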

However, tries also have limits. They can use a lot of memory, especially if usernames don’t share many letters. To solve this, systems often use compressed versions like radix tries or only use tries for the most common or recently searched usernames.

B+ Trees: Scalable Indexing for Massive Datasets

When it comes to storing and searching large, sorted sets of data, especially in traditional databases, another powerful data structure is used: the B+ tree.

B+ trees (and their close cousin, B-trees) are widely used in relational databases to index fields like usernames. These trees keep keys in sorted order and allow searches in O(log n) time, where n is the number of entries. Because each node holds many keys, the logarithm has a large base and the tree stays shallow: a binary tree over a billion usernames would be about 30 levels deep, but a B+ tree with a branching factor in the hundreds needs only around four or five levels to find one.
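
A quick back-of-the-envelope calculation shows how much the branching factor matters for tree depth. The fanout of 256 below is an assumed, illustrative value; real fanout depends on page size and key size.

```python
def levels_needed(num_keys, fanout):
    """Smallest number of levels at which fanout**levels >= num_keys."""
    levels, capacity = 0, 1
    while capacity < num_keys:
        capacity *= fanout
        levels += 1
    return levels


ONE_BILLION = 1_000_000_000

binary_depth = levels_needed(ONE_BILLION, 2)   # each node splits two ways
wide_depth = levels_needed(ONE_BILLION, 256)   # assumed B+ tree fanout

print(binary_depth)  # 30
print(wide_depth)    # 4
```

Each level usually corresponds to one page read, so a shallow tree means only a few reads per lookup.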

B+ trees also make it easy to do range queries, like finding the next available username alphabetically. This is useful in cases where order matters, which is often true for username systems.
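
The sketch below imitates a range query using a sorted Python list as a stand-in for the ordered leaf level of a B+ tree. It is not a B+ tree implementation; it only shows why keeping keys in order makes range scans cheap. The function name and sample data are made up for this example.

```python
from bisect import bisect_left


def range_scan(sorted_names, low, high):
    """Return every name with low <= name < high.

    Two binary searches find the boundaries, then the matches are one
    contiguous slice, much like walking linked leaf pages in a B+ tree.
    """
    start = bisect_left(sorted_names, low)
    end = bisect_left(sorted_names, high)
    return sorted_names[start:end]


names = sorted(["carol", "bytemonk", "alice", "bytemage", "bob", "bytemonkey"])

# All taken usernames from "byte" up to (but not including) "bytf".
print(range_scan(names, "byte", "bytf"))
# ['bytemage', 'bytemonk', 'bytemonkey']
```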

However, as the data grows into billions of entries, it becomes harder to maintain the B+ tree’s performance on a single machine. This is where distributed databases like Google Cloud Spanner help. They split the sorted data across many machines and copies (replicas), allowing the system to scale out smoothly and handle huge workloads.

Bloom Filters: Blazing-Fast Probabilistic Checks

While Redis hashmaps, tries, and B+ trees are all powerful on their own, there’s one more data structure worth highlighting: the Bloom filter.

Bloom filters are smart, probabilistic data structures made for one main job: checking whether an item might be in a set, using very little memory. Here’s how they work:

- A Bloom filter is a bit array combined with a few hash functions.

- When you add a username, it’s hashed several times, and each hash sets certain bits in the array.

- To check if a username is there, you hash it the same way and see if all the related bits are set to 1. If even one bit is 0, the username is definitely not in the set.
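
The three steps above can be sketched in a few lines. This version is a simplified assumption rather than a production design: instead of several independent hash functions, it derives all bit positions from two halves of a single SHA-256 digest (a common shortcut known as double hashing). The class name and the sizes used are illustrative.

```python
import hashlib


class BloomFilter:
    def __init__(self, num_bits, num_hashes):
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = bytearray((num_bits + 7) // 8)  # bit array, all zeros

    def _positions(self, item):
        """Bit positions for an item, derived from one SHA-256 digest."""
        digest = hashlib.sha256(item.encode("utf-8")).digest()
        h1 = int.from_bytes(digest[:8], "big")
        h2 = int.from_bytes(digest[8:16], "big") | 1  # force odd, never zero
        return [(h1 + i * h2) % self.num_bits for i in range(self.num_hashes)]

    def add(self, item):
        for pos in self._positions(item):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def might_contain(self, item):
        """False means definitely absent; True means possibly present."""
        return all(
            self.bits[pos // 8] & (1 << (pos % 8))
            for pos in self._positions(item)
        )


taken = BloomFilter(num_bits=10_000, num_hashes=7)
for name in ["bytemonk", "alice", "bob"]:
    taken.add(name)

print(taken.might_contain("bytemonk"))  # True: added items are always found
```

A `False` result can be trusted completely, while a `True` result still needs confirmation from an exact source such as a cache or database.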

The great thing about Bloom filters is that they never give false negatives: if they say something isn’t there, it truly isn’t. The trade-off is that they might give false positives (saying an item is there when it isn’t). This is usually acceptable, because a false positive only costs one extra check in the cache or database, while every definite "no" skips that check entirely.

Bloom filters save a huge amount of space. For example, to handle 1 billion usernames with only a 1% false positive rate, you’d need about 1.2 GB of memory, far less than storing all the full keys directly.
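
That figure can be checked with the standard Bloom filter sizing formulas: for n items and a target false positive rate p, the bit count is m = -n ln(p) / (ln 2)^2 and the number of hash functions is k = (m / n) ln 2. The helper name below is made up for this sketch.

```python
import math


def bloom_size(num_items, false_positive_rate):
    """Return (bits, hash_count) from the standard sizing formulas."""
    bits = math.ceil(
        -num_items * math.log(false_positive_rate) / (math.log(2) ** 2)
    )
    hash_count = round(bits / num_items * math.log(2))
    return bits, hash_count


bits, hash_count = bloom_size(1_000_000_000, 0.01)
gigabytes = bits / 8 / 1e9  # decimal gigabytes

print(f"{gigabytes:.2f} GB with {hash_count} hash functions")
```

This works out to roughly 9.6 bits per username, regardless of how long the usernames themselves are.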

That’s why large systems like Apache Cassandra use Bloom filters to avoid unnecessary disk checks. In some cases, companies even keep a global Bloom filter of all taken usernames in memory, so most checks can be answered instantly without putting extra load on caches or databases.

Combining the Pieces: A Multi-Layered Architecture for Scalable Username Checks

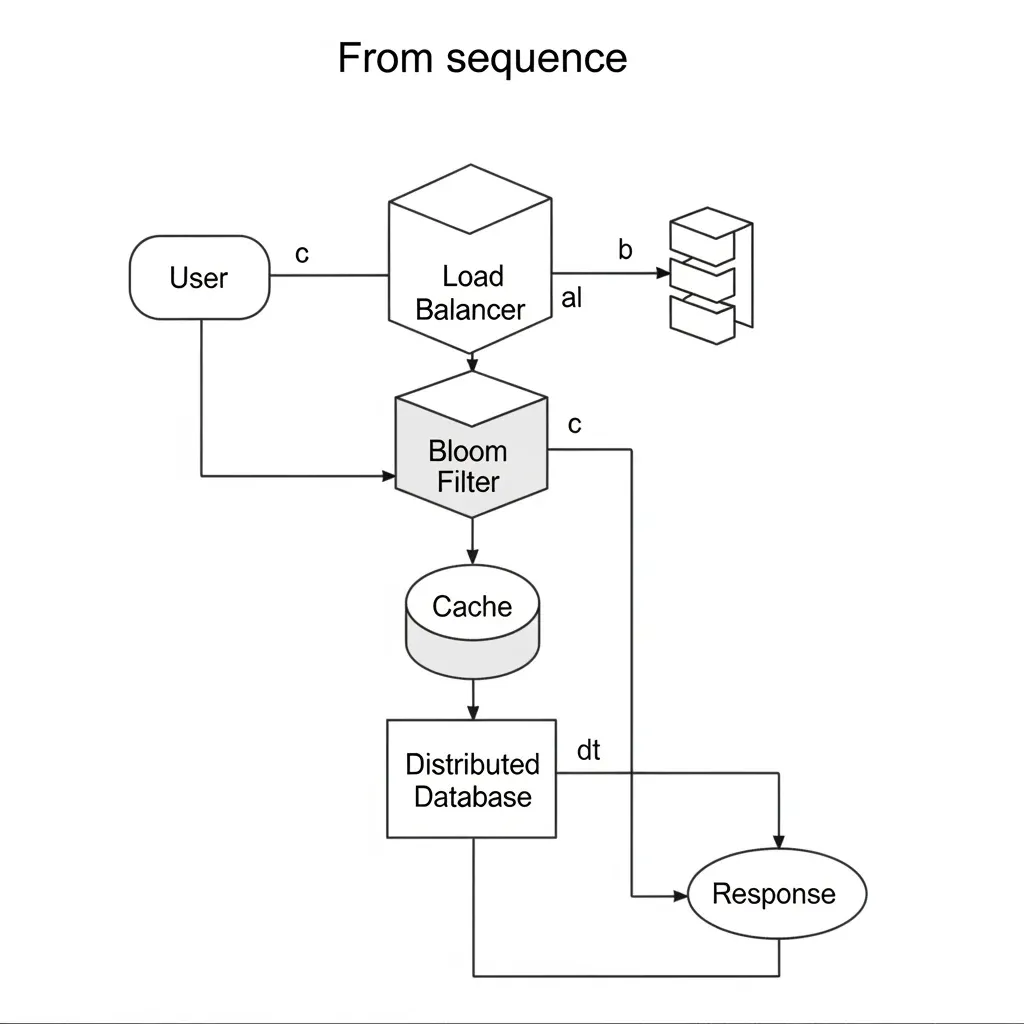

Now that we've looked at the individual data structures behind username lookup systems, let’s see how they all work together in a real-world, large-scale architecture.

With billions of users and heavy global traffic, tech giants like Google, Meta, and Amazon don’t rely on just one data structure or method. Instead, they carefully combine and layer different technologies to achieve maximum speed, reduce memory usage, and keep the load on their databases as low as possible.

Load Balancing: Directing Traffic to the Right Locations

The first step in this multi-layered approach is smart load balancing. In large distributed systems, load balancing usually happens at two levels: global and local.

- Global load balancing (also called edge-level load balancing) uses DNS-based or anycast routing to send user requests to the closest regional data center. For example, a user in Europe would be directed to a data center in the EU instead of one in the US.

- Local load balancing happens inside each data center. It spreads incoming traffic across multiple backend servers or service instances, using tools like Nginx or AWS Elastic Load Balancing (ELB).

By routing traffic to the nearest and most available resources, this approach helps reduce delays (latency) and makes sure the system runs efficiently.
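
The two levels can be imitated in a few lines of application code. In practice, global routing is done by DNS or anycast rather than by code like this, so treat the sketch only as a model of the decisions involved. The region names, latency numbers, and server names are invented.

```python
from itertools import cycle


def nearest_region(latency_ms_by_region):
    """Global step: pick the region with the lowest measured latency."""
    return min(latency_ms_by_region, key=latency_ms_by_region.get)


class RoundRobinBalancer:
    """Local step: hand out backend servers in a repeating rotation."""

    def __init__(self, servers):
        self._rotation = cycle(servers)

    def next_server(self):
        return next(self._rotation)


# Hypothetical latencies as seen by a user in Europe.
latencies = {"eu-west": 18, "us-east": 95, "ap-south": 140}
region = nearest_region(latencies)
print(region)  # eu-west

balancer = RoundRobinBalancer(["eu-app-1", "eu-app-2", "eu-app-3"])
picks = [balancer.next_server() for _ in range(4)]
print(picks)  # ['eu-app-1', 'eu-app-2', 'eu-app-3', 'eu-app-1']
```

Round robin is only one possible local policy; load balancers commonly also support strategies such as least connections.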

The Bloom Filter: The First Line of Defense

Once the request reaches the backend servers, the first stop is usually a Bloom filter. In this setup, the Bloom filter isn’t a separate server. It’s a small, fast in-memory data structure that lives inside each application or query server.

Each backend server keeps its own copy of the Bloom filter, which is updated regularly from a central source or rebuilt from the database. When a username lookup request comes in, it first checks the Bloom filter, which acts like the system’s "bouncer."

The Bloom filter can instantly say if a username definitely doesn’t exist, helping the system skip expensive database checks. If the filter instead reports that the username might exist, which could be a real match or a false positive, the request moves on to the next layer for further checking.

The In-Memory Cache: Blazing-Fast Lookups

After the Bloom filter, the next layer is an in-memory cache, often powered by Redis or Memcached. This cache keeps recently used data close at hand, so a hit can typically be served in well under a millisecond.

If the username was checked recently, the cache will have the answer, and the system can reply instantly. But if it’s not in the cache (a cache miss), the request then moves to the final layer: the distributed database.
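
This hit-or-miss flow is often called the cache-aside pattern. Below is a minimal sketch using a small in-process cache with expiry as a stand-in for Redis or Memcached. The `TTLCache` class, the 60-second lifetime, and the `fake_db` function are assumptions for illustration.

```python
import time


class TTLCache:
    """Tiny in-process cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (value, expiry time)

    def get(self, key):
        entry = self._entries.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._entries[key]  # expired: treat as a miss
            return None
        return value

    def set(self, key, value):
        self._entries[key] = (value, self._clock() + self._ttl)


def username_exists(username, cache, load_from_db):
    """Cache-aside: answer from the cache, else ask the database and store."""
    cached = cache.get(username)
    if cached is not None:  # both True and False are valid cached answers
        return cached
    exists = load_from_db(username)
    cache.set(username, exists)
    return exists


db_calls = []


def fake_db(username):
    """Hypothetical database lookup that records how often it is called."""
    db_calls.append(username)
    return username in {"bytemonk"}


cache = TTLCache(ttl_seconds=60)
first = username_exists("bytemonk", cache, fake_db)   # miss, goes to fake_db
second = username_exists("bytemonk", cache, fake_db)  # hit, served from cache
print(first, second, len(db_calls))  # True True 1
```

Caching "not taken" answers is a judgment call, since such an entry goes stale the moment someone registers that name. A short expiry limits the damage, and the final decision at registration time still has to come from the database.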

The Distributed Database: The Authoritative Source

The final, authoritative check for username availability happens in the distributed database layer. Tech giants often use databases built for massive scale, such as Apache Cassandra or Amazon DynamoDB.

These databases smartly split data across thousands of machines using techniques like consistent hashing. This spreads the load evenly and allows for extremely fast lookups, even when dealing with billions of usernames.
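
Here is a small sketch of consistent hashing, assuming a ring with virtual nodes. It is a teaching model, not how any particular database implements partitioning. The node names, the choice of SHA-256, and the count of 100 virtual nodes are all illustrative.

```python
import bisect
import hashlib


def ring_hash(key):
    """Map a string to a position on the ring (a 64-bit integer)."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


class ConsistentHashRing:
    def __init__(self, nodes, vnodes=100):
        self._vnodes = vnodes
        self._points = []  # sorted ring positions
        self._owners = {}  # ring position -> node name
        for node in nodes:
            self.add_node(node)

    def add_node(self, node):
        # Each node owns many small arcs of the ring, which evens out load.
        for i in range(self._vnodes):
            point = ring_hash(f"{node}#{i}")
            self._owners[point] = node
            bisect.insort(self._points, point)

    def node_for(self, key):
        """Owner is the first ring position clockwise from the key's hash."""
        idx = bisect.bisect_right(self._points, ring_hash(key))
        return self._owners[self._points[idx % len(self._points)]]


ring = ConsistentHashRing(["db-1", "db-2", "db-3"])
usernames = [f"user{i}" for i in range(1000)]
before = {name: ring.node_for(name) for name in usernames}

ring.add_node("db-4")
after = {name: ring.node_for(name) for name in usernames}

moved = [name for name in usernames if before[name] != after[name]]
# Only keys that now belong to the new node change owner.
print(all(after[name] == "db-4" for name in moved))  # True
```

The key property is that adding a machine relocates only the usernames that land on the new machine, instead of reshuffling everything as a plain `hash(key) % machine_count` scheme would.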

Once the database confirms whether the username exists or not, the application server sends the final result back through the load balancers to the user.
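
Putting the three lookup layers together, the whole flow can be modeled as one short function. Everything here is a stand-in: a plain set plays the Bloom filter (with one deliberate false positive added), a dict plays the cache, and a set plays the database. The function name and sample data are made up.

```python
def check_username(username, bloom_might_contain, cache, db_exists):
    """Return (is_taken, layer_that_answered)."""
    # Layer 1: a "no" from the Bloom filter is definitive.
    if not bloom_might_contain(username):
        return False, "bloom"
    # Layer 2: recently checked names are answered from the cache.
    if username in cache:
        return cache[username], "cache"
    # Layer 3: the database is the authoritative source.
    taken = db_exists(username)
    cache[username] = taken
    return taken, "database"


database = {"bytemonk", "alice"}            # stand-in for the real store
bloom_stand_in = database | {"bytemonkey"}  # includes one false positive
cache = {}


def lookup(name):
    return check_username(
        name, bloom_stand_in.__contains__, cache, database.__contains__
    )


print(lookup("zed"))         # (False, 'bloom')
print(lookup("bytemonk"))    # (True, 'database')
print(lookup("bytemonk"))    # (True, 'cache')
print(lookup("bytemonkey"))  # (False, 'database'): the false positive is caught
```

The last call shows why a false positive is harmless: the Bloom filter lets the request through, and the database gives the correct answer.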

Real-World Examples: How Tech Giants Implement Scalable Username Systems

Now that we've explored the individual components and the overall architecture, let’s see how some tech giants use these systems in real life:

Google: Leveraging Spanner for Scalable Indexing

Google’s distributed database, Cloud Spanner, is a great example of how to handle huge, sorted datasets like usernames. Spanner spreads the sorted data (backed by B-tree-like structures) across many machines and replicas, allowing it to scale horizontally and still handle millions of queries per second with low delay.

By building their username system on Spanner, Google can easily manage updates and keep everything synced across different servers, even with billions of users.

Meta (Facebook): Combining Bloom Filters and Cassandra

At Meta (formerly Facebook), the username lookup system likely uses a mix of Bloom filters and Apache Cassandra, a NoSQL database known for its scalability and high availability.

Meta probably keeps a global Bloom filter of all taken usernames in memory, so most lookups can be filtered instantly before going to Cassandra. Then, Cassandra’s ability to distribute data across many machines makes it possible to support huge platforms like Instagram.

Amazon: Leveraging DynamoDB and Route 53 for Global Reach

Amazon’s username checks likely use Amazon DynamoDB, a fully managed NoSQL database, along with Amazon Route 53, its global DNS service.

DynamoDB’s distributed design and auto-scaling make it perfect for handling billions of usernames. Meanwhile, Route 53 helps direct user requests to the nearest available data center, reducing delays for customers worldwide.

By combining these services, Amazon can create a highly scalable, reliable, and globally accessible username lookup system to support its massive range of products and services.

Conclusion: The Unsung Heroes Behind the "Username Taken" Message

The next time you see the message "This username is already taken," remember it’s the result of a complex, multi-layered system working hard behind the scenes. From the lightning-fast caching of Redis hashmaps to the scalable power of B+ trees and the smart, space-saving magic of Bloom filters, tech giants have built impressive ways to handle username checks on a global scale.

By carefully combining these data structures, using distributed databases, and applying smart load balancing, companies like Google, Meta, and Amazon make sure your username check happens in just milliseconds, even with billions of users worldwide.

So, the next time you sign up for a new app or service, take a moment to appreciate the real heroes of the tech world: the data structures, distributed systems, and clever designs that make these quick checks possible. It’s a true example of how powerful and creative real-world engineering can be.
